Open Access

Article

05 March 2026

Light-Guided Autonomous Drone Navigation for Indoor GPS-Denied Environments

Autonomous drones operating indoors cannot rely on Global Positioning System (GPS) signals for precise navigation because of severe signal attenuation and multipath interference in GPS-denied spaces. This paper presents a novel Li-Fi-based optical positioning system that is combined with high-sensitivity photodiode sensor arrays to enable robust drone guidance in challenging indoor environments where conventional radio-frequency localization fails. The proposed system uses ceiling-mounted light-emitting diode (LED) luminaires strategically distributed across the operational space, each transmitting a unique identification code through high-frequency light modulation at rates imperceptible to human vision, thereby providing illumination and positioning simultaneously. Unlike existing visible light communication (VLC) positioning studies that focus on static receivers, our system integrates real-time optical localization directly into the UAV control loop at 120 Hz, achieving closed-loop autonomous navigation without GPS or RF assistance. The system demonstrates sub-decimeter positioning accuracy (<8 cm) and low latency (4.2 ms), and it operates successfully on resource-constrained micro-UAV platforms (a 250 g quadcopter with an STM32 microcontroller; OpenELAB Technology Ltd., Garching bei München, Germany). Experimental validation includes complex 3D trajectory tracking, multi-room scalability analysis, and quantitative comparison with existing localization technologies, confirming the viability of Li-Fi-guided autonomous flight for practical indoor applications.
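To illustrate how uniquely coded ceiling luminaires can yield a position fix, the following is a minimal sketch of a generic weighted-centroid estimate. It is not the method of the paper, which the abstract does not describe. The luminaire map `LED_MAP`, the function name `weighted_centroid`, and the use of received signal strength as the weight are all assumptions made for illustration.

```python
# Hypothetical map from decoded LED identification code to ceiling position (metres).
LED_MAP = {1: (0.0, 0.0), 2: (2.0, 0.0), 3: (0.0, 2.0), 4: (2.0, 2.0)}


def weighted_centroid(readings, led_map):
    """Estimate an (x, y) position from per-LED signal strengths.

    readings: dict mapping a decoded LED ID to a received strength (arbitrary units).
    Unknown IDs and non-positive strengths are ignored.
    Returns None when no usable reading is available.
    """
    total = 0.0
    x_sum = 0.0
    y_sum = 0.0
    for led_id, strength in readings.items():
        if led_id not in led_map or strength <= 0:
            continue
        led_x, led_y = led_map[led_id]
        x_sum += strength * led_x
        y_sum += strength * led_y
        total += strength
    if total == 0.0:
        return None
    return (x_sum / total, y_sum / total)


if __name__ == "__main__":
    # A receiver that sees the two LEDs on the x = 2 m edge more strongly.
    print(weighted_centroid({1: 1.0, 2: 3.0, 3: 1.0, 4: 3.0}, LED_MAP))
```

A weighted centroid is cheap enough for a microcontroller, but its accuracy depends on luminaire spacing; a real system would likely use a calibrated optical channel model instead.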

Open Access

Article

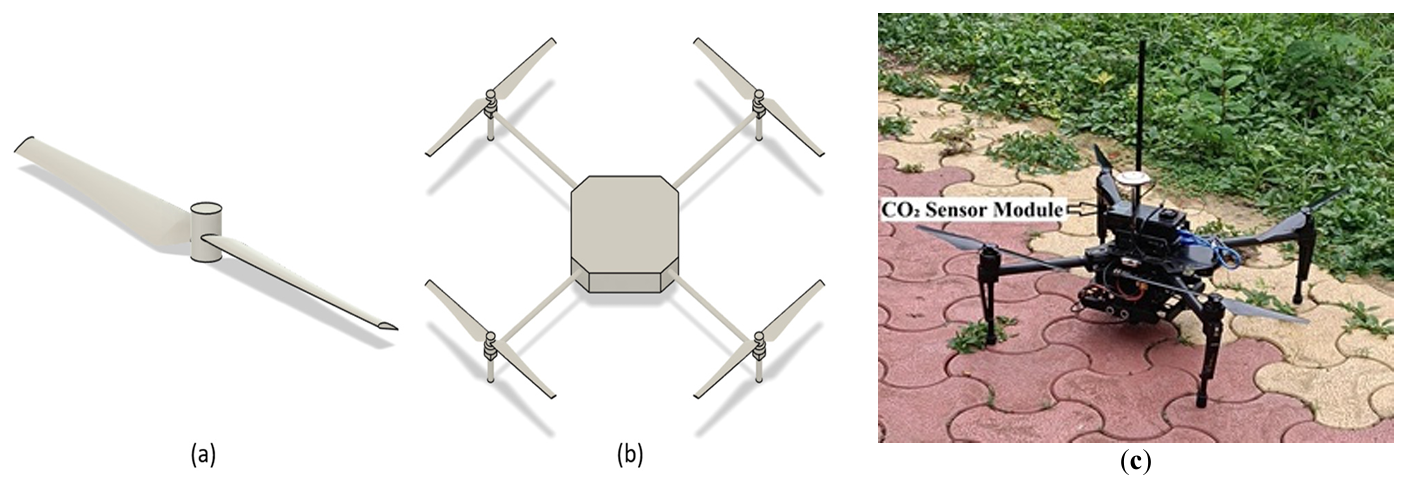

04 February 2026

Analysis of Sensor Locations in Drone Aided Environmental Monitoring System Using Computational Fluid Dynamics (CFD) Studies

Recent advancements in unmanned aerial vehicle (UAV) technology have enabled flexible, high-resolution monitoring of atmospheric CO2, particularly in complex or otherwise inaccessible environments. This study employs Computational Fluid Dynamics (CFD) to investigate the downwash flow field of a hovering quadcopter UAV, with the objective of identifying low-disturbance regions suitable for accurate atmospheric sensor placement. A quadcopter model was simulated using the SST k-ω turbulence model at rotor speeds ranging from 1000 to 6000 rpm. Results show that the strongest downwash and turbulence occur directly beneath the rotors, while the airflow above the central fuselage and in regions laterally distant from the rotors remains significantly calmer. The findings strongly recommend placing gas sensors either above the drone body or sufficiently far horizontally from the rotor plane to minimize measurement errors caused by propeller-induced flow.

Open Access

Article

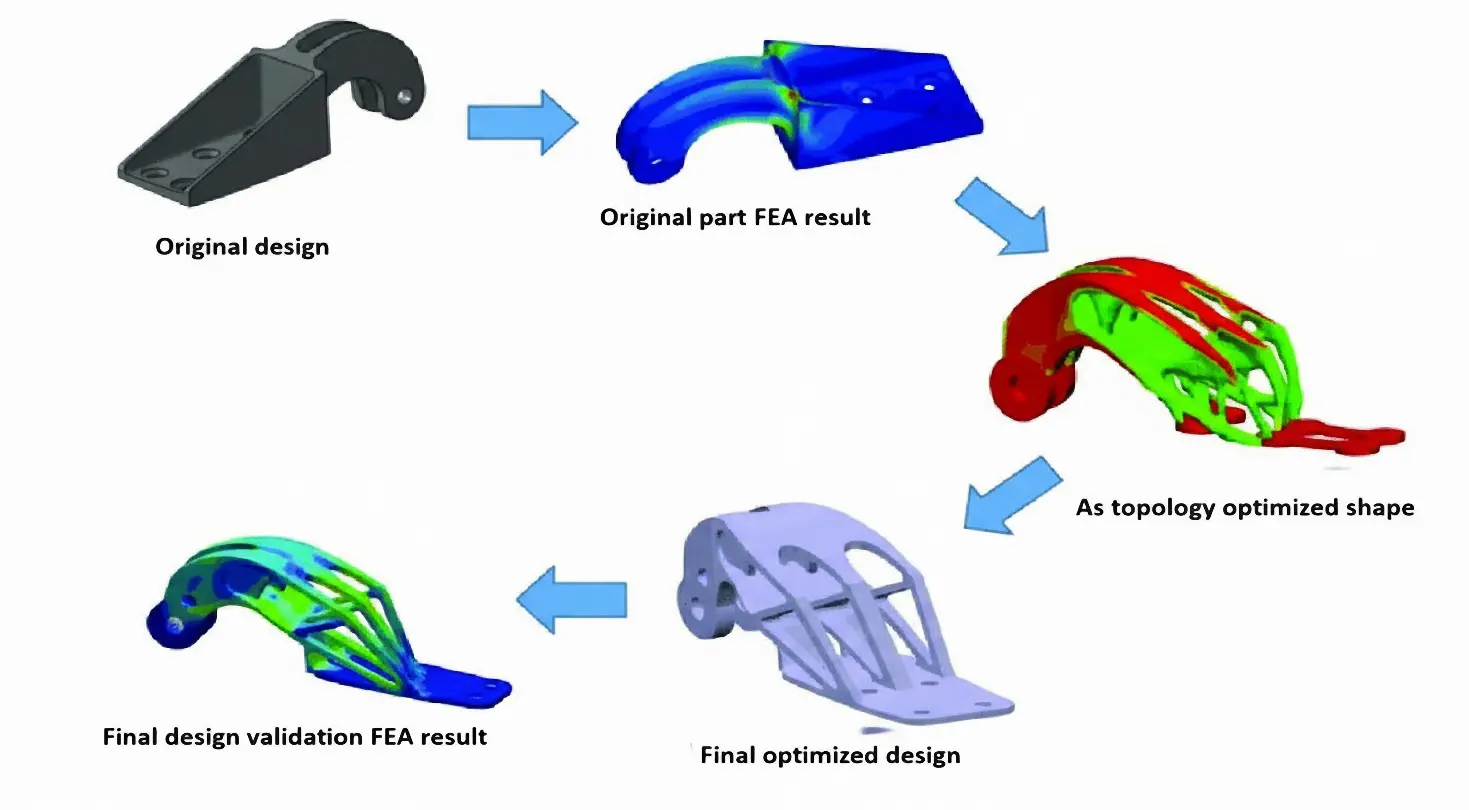

02 February 2026

Topology Optimization for Drone Structure: Comprehensive Workflow Including Conceptual Modeling, Components Preparation and Additive Manufacturing

Payload drones are often limited more by frame weight than by motor power. This work aims to design, optimize, and validate a flat octocopter frame with eight independently driven rotors arranged symmetrically on separate arms. The frame is designed in SOLIDWORKS, where Finite Element Analysis (FEA) and topology optimization are used to remove material from low-stress regions while keeping the main load paths intact. The final design cuts the frame mass by 37.3% compared to the baseline model and reduces the 3D printing time by about five hours on a Creality K1C printer with Polylactic Acid (PLA) filament. These changes increase the available thrust-to-weight margin for payload without exceeding the allowable stress or deformation limits of the material. The study also identifies compatible electronic components (flight controllers, ESCs, motors, and radio systems) to show that the proposed frame can be integrated into a complete multirotor platform. Overall, this work demonstrates a practical approach to designing lighter octocopter frames that are easier to 3D print and can be used more effectively for delivery and inspection missions.
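The link between frame mass and payload margin can be shown with simple arithmetic. The sketch below applies the reported 37.3% frame-mass reduction to made-up numbers: the baseline frame mass, the mass of the other components, the total thrust, and the target thrust-to-weight ratio are all hypothetical and do not come from the abstract.

```python
def optimized_mass(baseline_mass_g, reduction_percent):
    """Mass remaining after removing reduction_percent of the baseline mass."""
    return baseline_mass_g * (1.0 - reduction_percent / 100.0)


def thrust_to_weight(total_thrust_g, all_up_mass_g):
    """Ratio of total static thrust to all-up mass, both in gram-force / grams."""
    return total_thrust_g / all_up_mass_g


def payload_at_target_ratio(total_thrust_g, target_ratio, empty_mass_g):
    """Largest payload (g) that keeps thrust-to-weight at or above target_ratio."""
    return total_thrust_g / target_ratio - empty_mass_g


if __name__ == "__main__":
    # All of the following inputs are hypothetical illustration values.
    baseline_frame_g = 1000.0
    other_components_g = 2000.0   # motors, ESCs, battery, electronics
    total_thrust_g = 8000.0       # eight rotors combined
    target_ratio = 2.0

    new_frame_g = optimized_mass(baseline_frame_g, 37.3)  # 37.3% is from the abstract
    before = payload_at_target_ratio(
        total_thrust_g, target_ratio, baseline_frame_g + other_components_g)
    after = payload_at_target_ratio(
        total_thrust_g, target_ratio, new_frame_g + other_components_g)
    print(f"frame: {baseline_frame_g:.0f} g -> {new_frame_g:.0f} g")
    print(f"payload at T/W {target_ratio}: {before:.0f} g -> {after:.0f} g")
```

At a fixed thrust and target ratio, every gram removed from the frame becomes a gram of additional payload, which is the effect the abstract describes.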

Open Access

Article

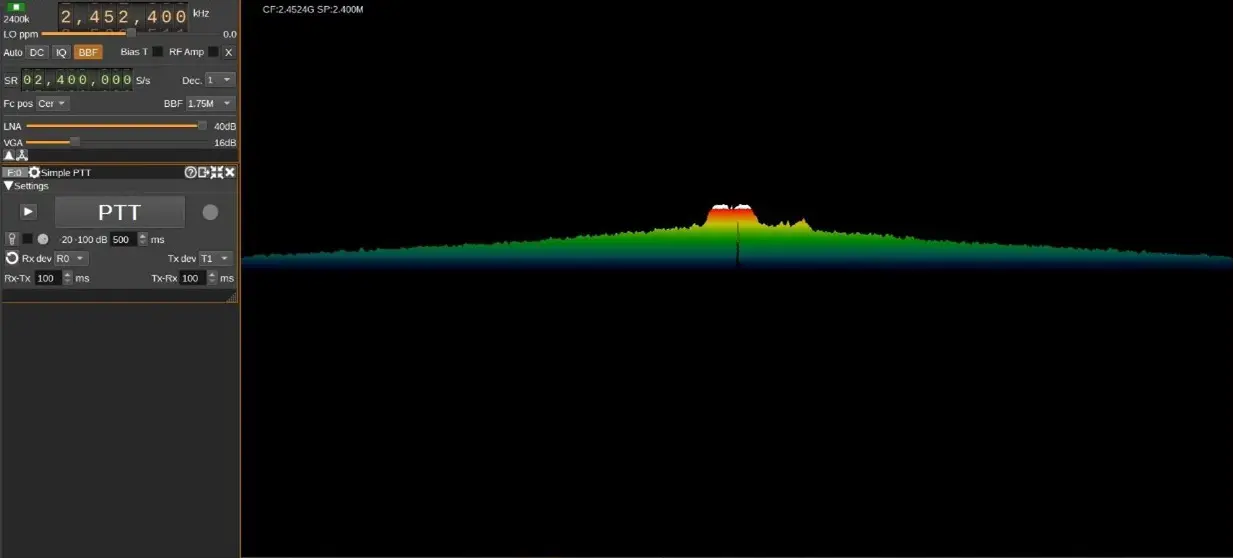

30 October 2025

Smart Drone Neutralization: AI Driven RF Jamming and Modulation Detection with Software Defined Radio

The increasing use of wireless technologies in many aspects of people’s lives has led to a congested electromagnetic spectrum, making it critical to manage the limited available spectrum as efficiently as possible. This is particularly important for military activities such as electronic warfare, where jamming is used to disrupt enemy communication, self-attacking drones, and surveillance drones. However, current detection methods used by armed personnel, such as optical sensors and Radio Detection and Ranging (RADAR), do not include Radio Frequency (RF) analysis, which is crucial for identifying the signals used to operate drones. To combat the security vulnerabilities posed by rogue or unidentified transmitters, RF transmitters should be detected not only by the available data content of their broadcasts but also by the physical properties of the transmitters. This requires faster fingerprinting and identification procedures that extend beyond traditional hand-engineered methods. In this paper, RF data from the drones’ remote controllers is identified and collected using Software Defined Radio (SDR), a radio that employs software to perform signal-processing tasks that were previously accomplished by hardware. A deep learning model is then trained to detect the modulation schemes used in drone communication and to select a suitable jamming strategy. This paper reviews Unmanned Aerial Vehicle (UAV) neutralization, communication signals, and Deep Learning (DL) applications, and it introduces an intelligent SDR-based system for modulation detection and drone jamming. The primary objective is to integrate recent research themes in UAV neutralization, communication signals, and Machine Learning (ML) and DL applications, delivering a more efficient and effective solution for identifying and neutralizing drones. The proposed system, together with DL approaches and advancements in UAV neutralization techniques, holds great potential for future research in this field.

Open Access

Article

27 October 2025

Interacting Multiple Model Adaptive Robust Kalman Filter for Position Estimation for Swarm Drones under Hybrid Noise Conditions

This study evaluates the Interacting Multiple Model Adaptive Robust Kalman Filter (IMM-ARKF) for accurate position estimation in a leader-follower swarm of nine drones, consisting of one leader and eight followers following distinct trajectories. The evaluation is conducted under hybrid noise conditions that combine Gaussian and Student’s t-distributions at 10%, 30%, and 50% ratios. The IMM-ARKF, which relies solely on its adaptive robust filtering mechanism, is compared with the standard Interacting Multiple Model Kalman Filter (IMM-KF) and Interacting Multiple Model Extended Kalman Filter (IMM-EKF) methods. Simulations show that IMM-ARKF provides better accuracy, reducing root mean square error (RMSE) by up to 43.9% compared to IMM-EKF and 34.9% compared to IMM-KF across different noise conditions, owing to its ability to adapt to hybrid noise. However, this improved performance comes at a computational cost: processing time increases by up to 148% compared to IMM-EKF and 92.1% compared to IMM-KF, reflecting the complexity of its adaptive approach. These results demonstrate the effectiveness of IMM-ARKF in enhancing navigation accuracy and robustness for multi-drone systems in challenging environments.
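The hybrid noise condition and the RMSE comparison can be sketched in a few lines. This is an illustrative sketch only, not the simulation from the study: it assumes the ratio denotes the share of heavy-tailed Student's t samples (the abstract does not say which component the ratio refers to), and the degrees of freedom, the scale `sigma`, and all function names are assumptions.

```python
import math
import random


def student_t(rng, dof):
    """Draw one Student's t sample as Z / sqrt(chi2 / dof)."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(dof / 2.0, 2.0)  # chi-square with `dof` degrees of freedom
    return z / math.sqrt(chi2 / dof)


def hybrid_noise(rng, n, t_ratio, sigma=1.0, dof=3.0):
    """Return n samples; each is heavy-tailed with probability t_ratio, else Gaussian."""
    samples = []
    for _ in range(n):
        if rng.random() < t_ratio:
            samples.append(sigma * student_t(rng, dof))
        else:
            samples.append(rng.gauss(0.0, sigma))
    return samples


def rmse(estimates, truth):
    """Root mean square error between two equal-length sequences."""
    if len(estimates) != len(truth):
        raise ValueError("sequences must have equal length")
    squared = sum((e - t) ** 2 for e, t in zip(estimates, truth))
    return math.sqrt(squared / len(truth))


def rmse_reduction_percent(baseline_rmse, improved_rmse):
    """Percentage by which improved_rmse is lower than baseline_rmse."""
    return 100.0 * (baseline_rmse - improved_rmse) / baseline_rmse


if __name__ == "__main__":
    rng = random.Random(42)
    truth = [0.0] * 1000
    for ratio in (0.1, 0.3, 0.5):
        noisy = hybrid_noise(rng, len(truth), ratio)
        print(f"t ratio {ratio:.0%}: raw measurement RMSE = {rmse(noisy, truth):.3f}")
```

The reported figures, such as "up to 43.9%", are reductions of this kind: `rmse_reduction_percent` applied to the RMSE of a baseline filter and the RMSE of IMM-ARKF.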

Open Access

Article

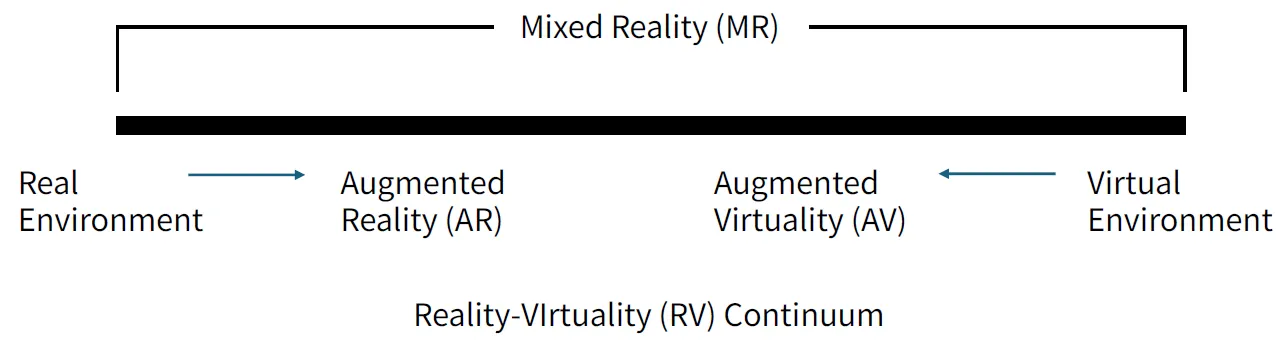

23 September 2025

A Survey on XR-Based Drone Simulation: Technologies, Applications, and Future Directions

This paper presents a comprehensive survey of Extended Reality (XR)-based drone simulation systems, encompassing their architectures, simulation engines, physics modeling, and diverse training applications. With a particular focus on manual multirotor drone operations, this study highlights how Virtual Reality (VR) and Augmented Reality (AR) are increasingly vital for pilot training and mission rehearsal. We classify these simulators based on their hardware interfaces, spatial computing capabilities, and the integration of game and physics engines. Specific platforms such as Flightmare, AirSim, DroneSim, Inzpire Mixed Reality UAV Simulator, and SimFlight XR are analyzed to illustrate various design strategies, ranging from research-grade modular frameworks to commercial training tools. We also examine the implementation of spatial mapping and weather modeling to enhance realism in AR-based simulators. Finally, we identify critical challenges that remain to be addressed, including user immersion, regulatory alignment, and achieving high levels of physical realism, and propose future directions in which XR-integrated drone training systems can advance.

Open Access

Article

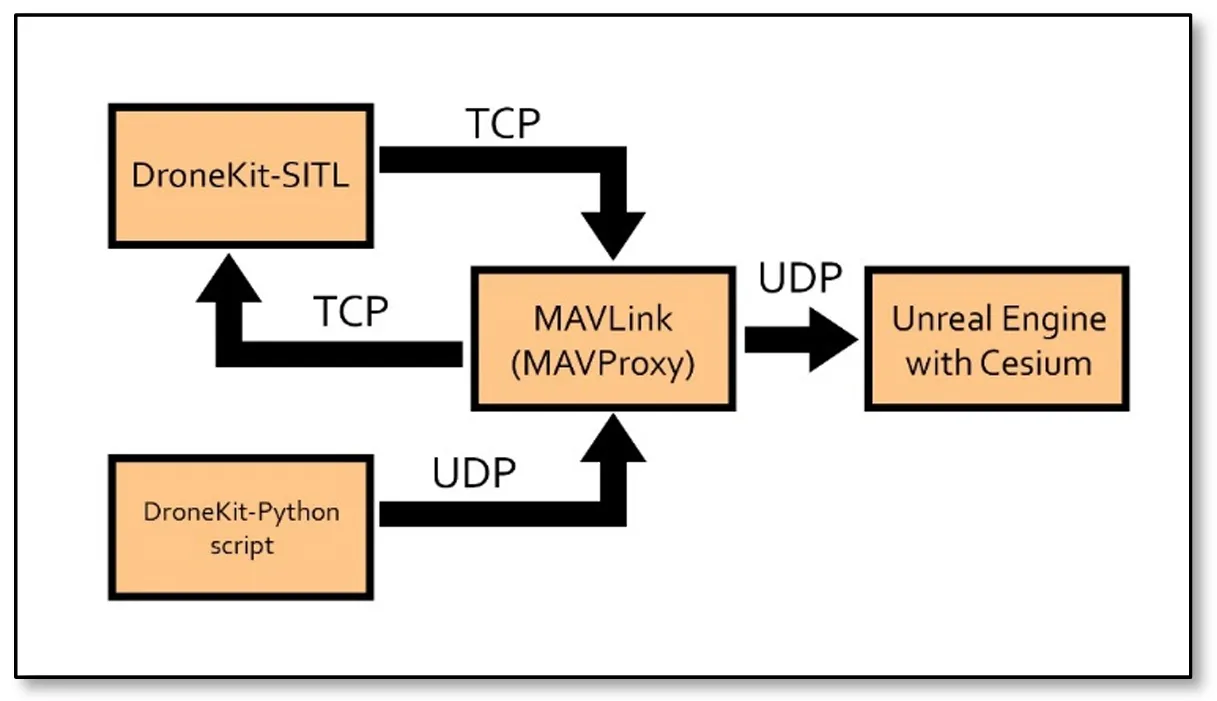

17 September 2025

An Approach to Simulation & Navigation of Autonomous Unmanned Aerial Vehicle in 3D

Drone simulation refers to the emulation of Unmanned Aerial Vehicles (UAVs) in a virtual environment that replicates real-world conditions in order to study and test the behavior, performance, and functionalities of drones. This paper explores the simulation of UAVs in the Unreal Engine environment using MAVProxy (Micro Air Vehicle Proxy) and the Python library DroneKit. This approach enables precise visualization and control of UAV flight dynamics in three dimensions. The use of Blueprints in Unreal Engine facilitates a cost-effective and accessible simulation process, allowing engineers and scientists to refine their UAV designs before real-world deployment. Results show the applicability of this approach across different environments, and an alternative approach also emerges as a viable option for visualizing textured buildings. This approach shows the power of open-source collaboration in advancing innovative solutions in the dynamic field of science and technology.

Open Access

Article

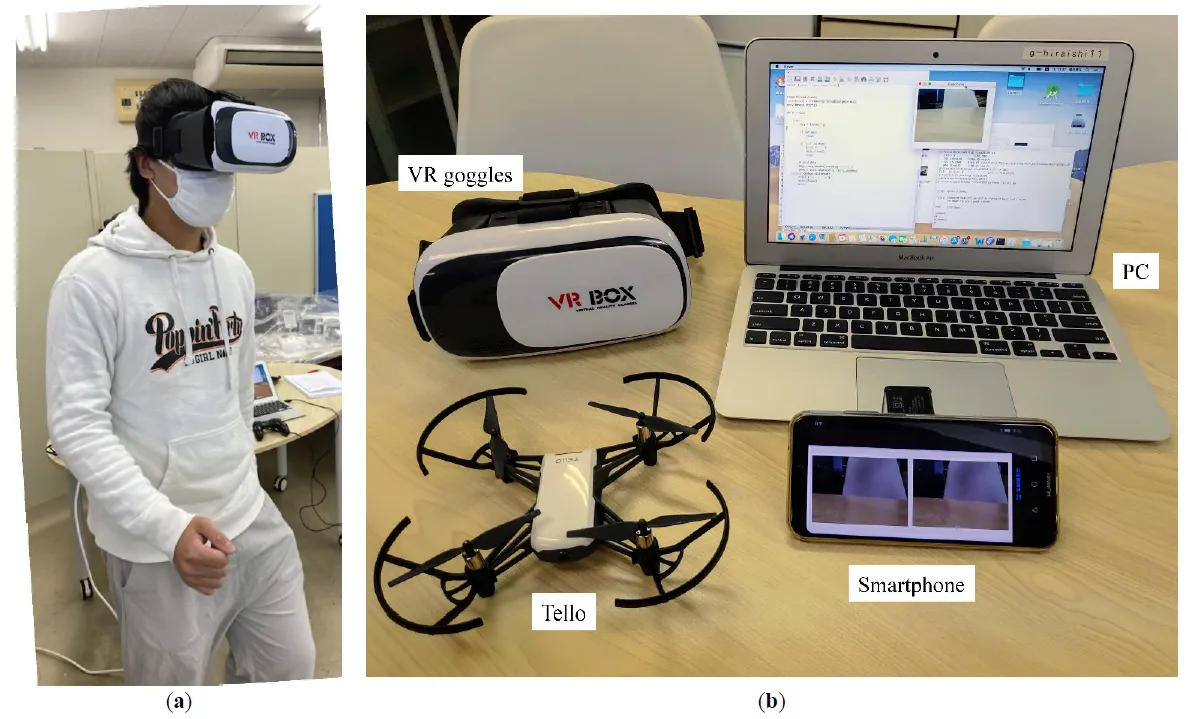

12 May 2025

Drone Operation with Human Natural Movement

This study proposes a method for operating drones using natural human movements. The operator simply wears virtual reality (VR) goggles, on which the image from the drone camera is displayed. When the operator turns their head, the drone turns to face the same direction. When the operator moves their head up or down, the drone rises or falls accordingly. When the operator walks in place, rather than actually walking forward, the drone moves forward. This allows the operator to control the drone as if they were walking in the air. Each of these movements is detected from the readings of the acceleration and magnetic field sensors of a smartphone mounted on the VR goggles. A machine learning method was adopted to distinguish between walking and non-walking movements. In a comparison with operation via a conventional remote controller, the remote controller performed better than the proposed approach in the early stages. However, once the participants had familiarized themselves with the natural operation, the differences became relatively small. This study combines drones, VR, and machine learning: VR provides drone pilots with a sense of realism and immersion, whereas machine learning enables the use of natural movements.

Open Access

Article

07 April 2025

Evaluating a Motion-Based Region Proposal Approach with Background Subtraction Methods for Small Drone Detection

The detection of drones in complex and dynamic environments poses significant challenges due to their small size and background clutter. This study aims to address these challenges by developing a motion-based pipeline that integrates background subtraction and deep learning-based classification to detect drones in video sequences. Two background subtraction methods, Mixture of Gaussians 2 (MOG2) and Visual Background Extractor (ViBe), are assessed to isolate potential drone regions in highly complex and dynamic backgrounds. These regions are then classified using the ResNet18 architecture. The Drone-vs-Bird dataset is utilized to test the algorithm, focusing on distinguishing drones from other dynamic objects such as birds, trees, and clouds. By leveraging motion-based information, the method enhances the drone detection process by reducing computational demands. Results show that ViBe achieves a recall of 0.956 and a precision of 0.078, while MOG2 achieves a recall of 0.857 and a precision of 0.034, highlighting the comparative advantages of ViBe in detecting small drones in challenging scenarios. These findings demonstrate the robustness of the proposed pipeline and its potential contribution to enhancing surveillance and security measures.
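The recall and precision figures above can be read through their standard definitions. The sketch below shows those definitions together with the F1 score; the function names are illustrative, and the F1 values it prints are derived here from the reported recall and precision, not reported in the abstract.

```python
def precision_recall(true_pos, false_pos, false_neg):
    """Return (precision, recall) from raw detection counts."""
    predicted = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Recall and precision as reported in the abstract.
    reported = {"ViBe": (0.078, 0.956), "MOG2": (0.034, 0.857)}
    for method, (precision, recall) in reported.items():
        print(f"{method}: F1 = {f1_score(precision, recall):.3f}")
```

With these inputs the derived F1 is about 0.14 for ViBe and 0.07 for MOG2. The low precision is expected at the region-proposal stage, where most motion regions are not drones and are left for the classifier to reject.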

Open Access

Article

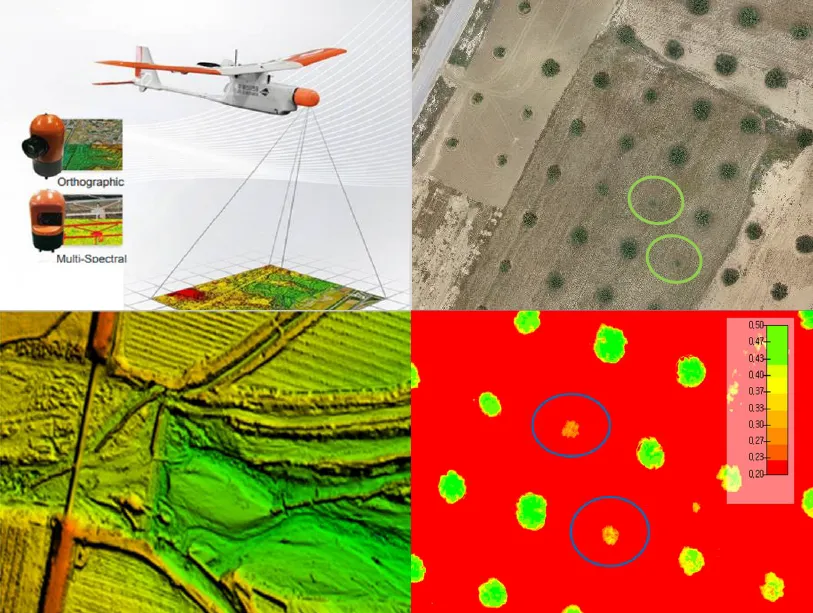

10 March 2025

Leveraging Drone Technology for Precision Agriculture: A Comprehensive Case Study in Sidi Bouzid, Tunisia

The integration of drone technology in precision agriculture offers promising solutions for enhancing crop monitoring, optimizing resource management, and improving sustainability. This study investigates the application of UAV-based remote sensing in Sidi Bouzid, Tunisia, focusing on olive tree cultivation in a semi-arid environment. REMO-M professional drones equipped with RGB and multispectral sensors were deployed to collect high-resolution imagery, enabling advanced geospatial analysis. A comprehensive methodology was implemented, including precise flight planning, image processing, GIS-based mapping, and NDVI assessments to evaluate vegetation health. The results demonstrate the significant contribution of UAV imagery in generating accurate land use classifications, detecting plant health variations, and optimizing water resource distribution. NDVI analysis revealed clear distinctions in vegetation vigor, highlighting areas affected by water stress and nutrient deficiencies. Compared to traditional monitoring methods, drone-based assessments provided high spatial resolution and real-time data, facilitating early detection of agronomic issues. These findings underscore the pivotal role of UAV technology in advancing precision agriculture, particularly in semi-arid regions where climate variability poses challenges to sustainable farming. The study provides a replicable framework for integrating drone-based monitoring into agricultural decision-making, offering strategies to improve productivity, water efficiency, and environmental resilience. The research contributes to the growing body of knowledge on agricultural technology adoption in Tunisia and similar contexts, supporting data-driven approaches to climate-smart agriculture.
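For readers unfamiliar with NDVI, it is the standard normalized difference of near-infrared and red reflectance, (NIR - Red) / (NIR + Red). The sketch below computes it per pixel on small nested lists; the function names and the sample reflectance values are illustrative and are not taken from the study.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel.

    Returns 0.0 when both bands are zero to avoid division by zero.
    """
    denominator = nir + red
    if denominator == 0:
        return 0.0
    return (nir - red) / denominator


def ndvi_grid(nir_band, red_band):
    """Apply ndvi() element-wise to two equally shaped 2D lists of reflectance."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


if __name__ == "__main__":
    # Made-up reflectance values: left column vegetated, right column bare soil.
    nir_band = [[0.50, 0.30], [0.45, 0.28]]
    red_band = [[0.10, 0.25], [0.08, 0.26]]
    for row in ndvi_grid(nir_band, red_band):
        print([round(value, 2) for value in row])
```

Values near 1 indicate dense, healthy vegetation, while values near 0 indicate bare soil or stressed plants, which is how NDVI maps reveal differences in vegetation vigor.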